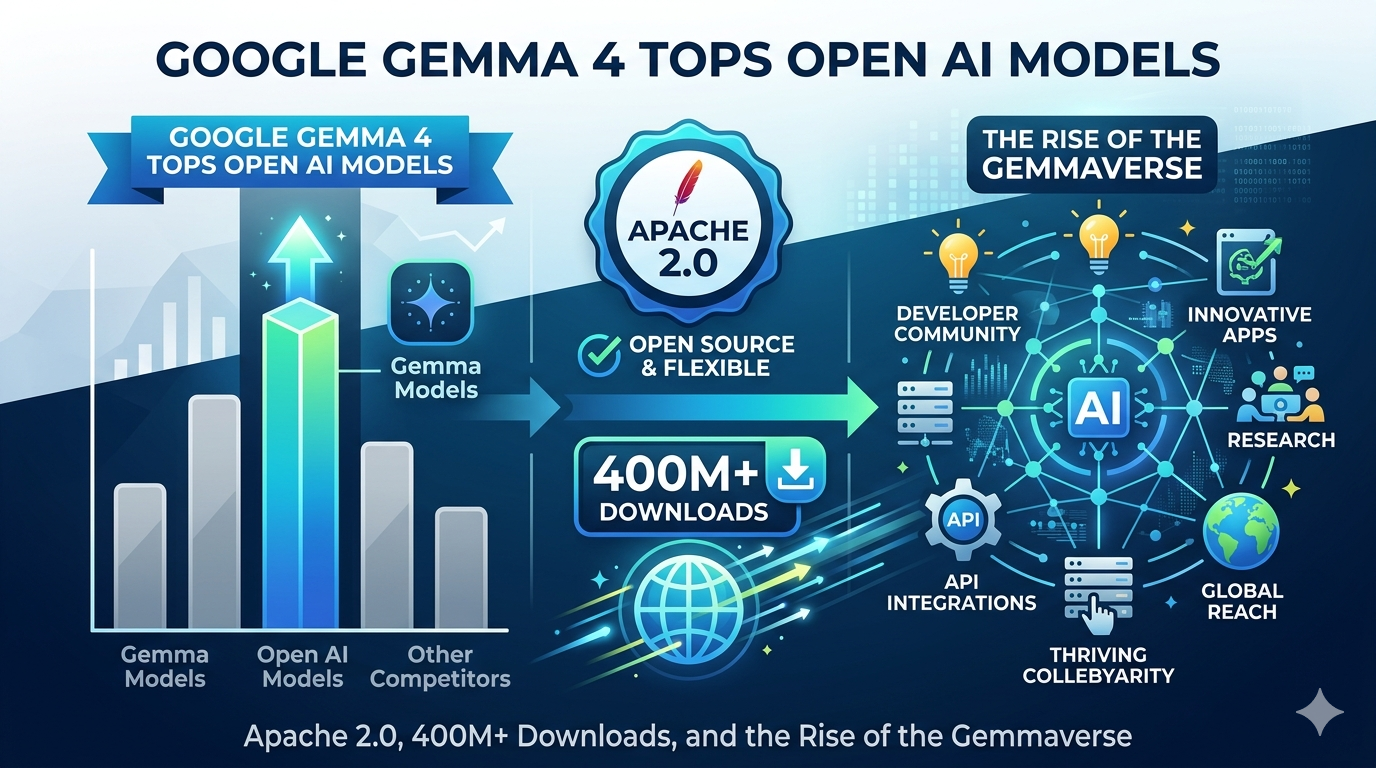

Something shifted in the open AI model race on April 2, 2026. Google released Gemma 4 — and this time, the license actually matters as much as the benchmark scores. For the first time in the Gemma family's history, the entire model lineup ships under the Apache 2.0 license. No custom usage policies. No buried termination clauses. No legal review friction for enterprise teams. Just download, modify, and build.

For developers who have spent the last two years watching Meta's Llama and Alibaba's Qwen gain mindshare partly because of their more permissive licensing, this is a significant moment. Google is not just releasing a better model. It is removing the biggest structural obstacle to commercial adoption of its open model ecosystem.

Why the Apache 2.0 License Change Is the Real Story

Previous versions of Gemma were available as open weights, meaning developers could download and run them, but the custom Gemma Terms of Use came with restrictions. Google could update the license unilaterally, developers had to propagate Google's rules to all Gemma-based projects, and some clauses could potentially extend to models trained on Gemma-generated synthetic data. Enterprise legal teams hated it. Many simply avoided the model entirely, defaulting to Llama, or to models such as Mistral whose Apache 2.0 terms were already well understood.

Gemma 4 replaces those custom terms with Apache 2.0, a first for the Gemma family. That structural shift does not get erased by the next benchmark update; it is what makes Gemma 4 a genuinely different proposition for enterprise adoption.

This open-source license provides a foundation for complete developer flexibility and digital sovereignty — granting you complete control over your data, infrastructure, and models.

Google, Gemma 4 Official Launch Blog, April 2026

What Are the Four Gemma 4 Models?

Google launched Gemma 4 in four sizes to suit different deployment environments. The smaller 2-billion- and 4-billion-parameter "Effective" models are intended for edge devices such as smartphones, while the 26-billion-parameter mixture-of-experts and 31-billion-parameter dense models target more compute-intensive workloads.

| Model | Parameters | Target Hardware | Context Window | Highlight |
|---|---|---|---|---|
| E2B | ~2B | Phones, Raspberry Pi, IoT | 128K | Offline speech recognition on mobile |
| E4B | ~4B | Smartphones, Jetson Nano | 128K | 42.5% AIME 2026, 4x faster than Gemma 3 |
| 26B MoE | 26B total / 4B active | Consumer GPU workstations | 256K | 88.3% AIME 2026, fits single H100 |
| 31B Dense | 31B | Developer workstations, cloud | 256K | 89.2% AIME 2026, #3 Arena.ai globally |

Performance That Punches Far Above Its Weight

The 31B model scored 89.2% on AIME 2026, up from 20.8% for the previous Gemma 3 generation. LiveCodeBench competitive coding jumped from 29.1% to 80.0%. Agentic tau2-bench performance leaped from 6.6% to 86.4%, a more than 13-fold improvement that indicates the models can handle complex, multi-step tool use that predecessors could not perform at production quality.
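To put those jumps on a common footing, the quoted scores can be turned into fold improvements and percentage-point gains. This is a minimal sketch using only the figures cited above; the function names are illustrative and not part of any Gemma tooling.

```python
# Scores quoted in the article, in percent: (Gemma 3, Gemma 4 31B).
SCORES = {
    "AIME 2026": (20.8, 89.2),
    "LiveCodeBench": (29.1, 80.0),
    "tau2-bench": (6.6, 86.4),
}


def fold_improvement(before: float, after: float) -> float:
    """Ratio of the new score to the old score."""
    return after / before


def point_gain(before: float, after: float) -> float:
    """Absolute gain in percentage points."""
    return after - before


for name, (before, after) in SCORES.items():
    print(
        f"{name}: {fold_improvement(before, after):.1f}x, "
        f"+{point_gain(before, after):.1f} points"
    )
```

The ratio is most dramatic for tau2-bench because the starting score was so low; the percentage-point gain is the steadier measure when comparing across benchmarks.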

The 26B MoE model is particularly interesting for production workloads. It uses a Mixture of Experts architecture in which only 4B of its 26B parameters activate for any given token, keeping inference costs low while delivering near-frontier results. Google said the 26B MoE and 31B Dense models provide more intelligence per parameter and outcompete much larger models, achieving frontier-level capabilities with significantly less hardware overhead. These state-of-the-art features can run on a single 80GB Nvidia H100 GPU.
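A back-of-the-envelope calculation shows why both larger models plausibly fit on one 80GB card. The sketch below is an estimate under stated assumptions: the article does not say which numeric precision Google used, so the bytes-per-parameter values are generic, and the estimate covers weights only (no KV cache, activations, or runtime overhead).

```python
# Assumed storage cost per parameter for common precisions (not from the article).
BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}

H100_MEMORY_GB = 80.0  # single-GPU capacity cited in the article


def weight_memory_gb(params_billions: float, dtype: str) -> float:
    """Approximate weight footprint in GB, taking 1 GB as 1e9 bytes."""
    return params_billions * BYTES_PER_PARAM[dtype]


def active_fraction(active_billions: float, total_billions: float) -> float:
    """Share of parameters used per forward pass in a mixture-of-experts model."""
    return active_billions / total_billions


for name, total in [("26B MoE", 26.0), ("31B Dense", 31.0)]:
    for dtype in BYTES_PER_PARAM:
        gb = weight_memory_gb(total, dtype)
        fits = "fits" if gb < H100_MEMORY_GB else "does not fit"
        print(f"{name} @ {dtype}: ~{gb:.1f} GB of weights ({fits} in 80 GB)")

print(f"26B MoE active share: {active_fraction(4.0, 26.0):.1%}")
```

Note that a mixture-of-experts model still has to keep all of its weights in memory; the saving from activating only 4B parameters shows up in compute per token, not in the memory footprint.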

Real-World Applications Already Running on Gemma

The Gemmaverse is not just a marketing term. It represents a genuine ecosystem of specialized models built on Google's open architecture. INSAIT created a pioneering Bulgarian-first language model (BgGPT), and Google worked with Yale University on Cell2Sentence-Scale to discover new pathways for cancer therapy, among many others. Gemma is also powering sovereign digital infrastructure, from automating state licensing processes in Ukraine to scaling multilingual AI across India's 22 official languages through Project Navarasa.

The Competitive Landscape: Honest Trade-offs

Gemma 4's launch day was also Alibaba's release day for Qwen 3.6-Plus, which offers a 1 million token context window compared to Gemma 4's 256K maximum. Meta's Llama 4 Scout is already in production with a 10 million token context. Where Gemma 4 wins clearly: human preference scores on Arena AI, scientific reasoning benchmarks, and edge deployment capabilities. Where it faces real competition: Qwen's vastly larger context window and Llama 4's long-document applications. Developers are not picking a single winner — they are making specific trade-offs based on deployment environment and use case.